From idea to intelligent system: a practical guide to the AI development lifecycle

Building AI doesn’t have to be overwhelming. Once you understand the AI development lifecycle, you can turn complexity into a clear, manageable process.

As of 2026, thousands of AI tools and AI-powered services have been introduced to users. This puts immense pressure on companies to keep up in the AI race, especially now that consumers are used to AI tools and expect businesses to always have AI assistance available.

However, with the increasing sophistication of this technology, the development process understandably seems intimidating. The assumption is often that the process is long, complicated, and expensive. This doesn’t have to be the case. As long as you take a structured approach, do enough research, and consult experts, the AI project will be like any other software development project - challenging but gratifying.

Today’s article explains everything you need to know about the AI development lifecycle, breaking each large step down into smaller ones, along with some challenges to be aware of. Let’s dive right in!

Key Takeaways:

- In the age of the AI race, knowing how the AI lifecycle works provides businesses with numerous long-term benefits: minimizing risks and fostering an ethical development process, all while creating a scalable product that has long-lasting, strong performance.

- The main stages of an AI lifecycle are identifying the main problems, collecting and preparing data, designing and training the model, and then evaluating and deploying the final product. To stay accurate and relevant after launch, the model needs constant data updates and retraining.

- Challenges involving the development process often relate to the data quality and infrastructure requirements.

Why is it important to understand the AI development lifecycle?

Do you really need to know all the steps before jumping into an AI development project? The short and long answers are both yes. There are long-term benefits to doing careful homework before the actual project begins.

Reducing risks and ensuring ethical AI development

Seeing how AI moves from idea to production allows you to implement efficient risk management strategies. This means:

- Integrate security throughout the AI lifecycle (e.g., input validation, adversarial testing, secure deployment, real-time monitoring) to mitigate threats before they compromise AI systems.

- Ensure your AI system is fair, accountable, and operates transparently.

- Reduce risks such as bias, harmful outputs, and privacy violations.

- Enable investigation and correction when errors or failures occur.

- Align AI systems with legal and regulatory requirements.

In short, reducing risks is not only about safeguarding the data but also about building an AI tool that is transparent and ethical.

Improving development efficiency and controlling costs

Grasping the AI development lifecycle means you can plan ahead to optimize costs. The team can plan resource allocation for each stage of the development process, ensuring there are always enough hands on deck, even if a team member leaves unexpectedly.

The team can also research and consult with experts to determine beforehand which criteria to focus on during each stage, preventing expensive mistakes and rework. This also means putting in place effective monitoring systems based on clear key performance indicators (KPIs) and automating repetitive, mundane tasks.

Scalability and production readiness (MLOps)

The AI lifecycle is foundational to effective MLOps, or machine learning operations. MLOps is the key to moving from an ad hoc, manual approach to systems that are scalable and production-ready. Having a clear idea of what steps the AI development process entails means the team can:

- Automate repetitive tasks like model training or data preparation through CI/CD.

- Close the gap between training and production data pipelines. MLOps uses feature stores to address this issue by centralizing reusable features and preventing inconsistencies, which also accelerates future model development.

- Keep AI systems reliable after deployment by applying MLOps practices.

- Encourage collaboration between ML engineers, data scientists, and operations teams.

Preventing performance decline from model drift

According to IBM, model drift occurs when a machine learning model’s performance declines over time because the underlying data or the relationship between inputs and outputs changes. Also known as model decay, this issue can lead to inaccurate predictions and unreliable decisions if left unaddressed.

AI models are trained on one version of reality, and they rely solely on this version to generate responses. However, the world never remains static, and data is constantly changing. A model may fail to capture these changes and may assume relationships that no longer exist, producing wrong results. One or two mistakes here and there might not seem like a big deal, but over time, businesses can suffer from faulty decisions, bias, unfairness, and even legal risks.

Gaining insight into the AI development lifecycle allows teams to practice continuous drift management, such as monitoring data distributions, running statistical tests, and investigating the root cause of any shift.
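
As a rough illustration of monitoring data distribution, the sketch below computes the Population Stability Index (PSI), one common statistic for comparing a feature’s training-time values with what the model sees in production. The function name, the bin count, and the sample data are assumptions made for this example. The thresholds often quoted for PSI (below 0.1 stable, above 0.25 significant shift) are rules of thumb, not hard limits.

```python
import math
from typing import Sequence


def population_stability_index(
    reference: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
) -> float:
    """Compare two samples of one numeric feature; higher means more shift."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 for constant features

    def bucket_shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the reference range are clamped into the edge buckets.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor keeps the logarithm defined for empty buckets.
        return [max(c / len(values), 1e-6) for c in counts]

    ref_shares = bucket_shares(reference)
    cur_shares = bucket_shares(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_shares, cur_shares))


# Made-up data: the production sample has shifted upward by 50 units.
training_sample = [float(v) for v in range(100)]
production_sample = [v + 50.0 for v in training_sample]
drift_score = population_stability_index(training_sample, production_sample)
```

In practice, a check like this would run on a schedule for each important feature, and a high score would trigger the root-cause investigation mentioned above rather than an automatic retrain.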

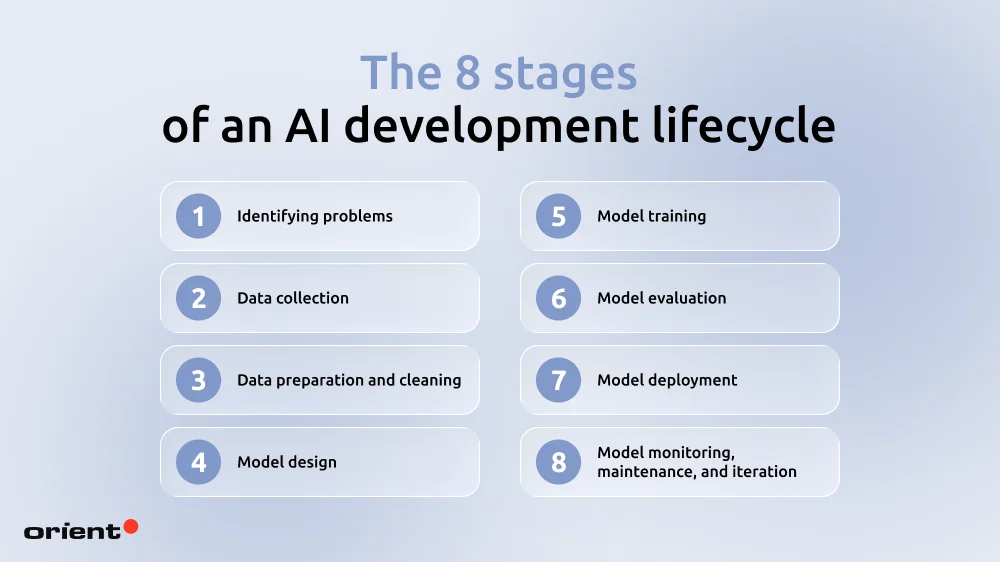

The 8 stages of an AI development lifecycle

Identifying problems

The very first step is what some people call the “exploring” phase. What this means is that before any actual development begins, the team needs to sit down and explore every aspect involving the AI project.

- Scope: What is the specific problem the app is trying to resolve? What is included and excluded from the project’s scope?

- Requirements: What are the requirements of the project? Identify both the functional and non-functional requirements. These can be gathered via surveys and interviews.

- Target users: Who are you solving these problems for? This question helps with narrowing the scope and setting clear boundaries.

- Define success: What does success mean to you and your team? Can you translate this definition both quantitatively and qualitatively? Which KPIs will be used to guide the teams throughout the entire development process?

- Risk assessment: What are the risks that come with the AI project? Consider ethical, financial, and reputational risks and how they can be mitigated. Don’t leave this until the final stages of the project.

- Technical feasibility: From the requirements, define the technical scope, evaluate the tech stack, and assess the available resources and the team’s capabilities. Do consider alternatives if the current approach isn’t the most effective one.

- Legal concerns: There is no single global AI regulation yet, but there are still significant rules to be aware of. The EU AI Act, the US approach to AI, and the UK’s AI strategy are among the recent regulatory efforts, with the strictest requirements aimed at high-risk applications. Make sure to go through them carefully, or consult experts if you are ever unsure.

Data collection

Data collection is a foundational step for building a successful AI model. AI learns patterns from the data provided to generate the most optimal response; thus, the data needs to be high-quality so the model can make accurate predictions and decisions.

First things first: where do businesses even collect the data from?

AI data collection sources

Data can come from public, private, human-interaction, or even synthetic sources.

- User interaction data: Clicks, searches, and app usage that help AI personalize experiences and understand user behavior.

- Web and social data: Content from websites and social platforms, often used for sentiment analysis and trend forecasting.

- Enterprise data: Information from systems like CRM or ERP, used for analytics, fraud detection, and process optimization.

- Sensors and IoT data: Continuous streams from devices like cameras or wearables, powering real-time use cases such as health monitoring and predictive maintenance.

- Public datasets: Open data from governments or research, useful for benchmarking and building AI models.

- Transactional data: Records of payments and orders that support fraud detection, demand forecasting, and personalization.

- Synthetic data: Artificially generated data that helps solve privacy, cost, and data scarcity issues.

Then we move on to the next step: data collection methods.

AI data collection methods

- Manual entry and labelling: Experts input and annotate data (e.g., medical image labelling), ensuring high-quality, domain-specific datasets.

- Automated data capture: Data is continuously collected from logs, APIs, and systems, keeping datasets up to date in real time.

- Web crawling and scraping: Bots extract large-scale data from websites to train NLP models and recommendation systems (with ethical considerations).

- Sensor-based collection: Real-time data from IoT devices and sensors, supporting use cases like predictive maintenance and health monitoring.

After the collection of data, there are still a few more steps to go through before moving to the next stage of AI development:

- Define clear policies for ownership, access, and retention, with proper documentation of data lineage and versioning.

- Protect sensitive data through anonymization and encryption during both collection and storage.

- Put clear processes in place to collect, track, and manage user consent for data usage in AI systems.
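
To make the protection step a little more concrete, here is a minimal sketch of replacing direct identifiers with keyed hashes before the data is stored. Strictly speaking this is pseudonymization rather than full anonymization, since anyone holding the key can still link records. The function names, the field names, and the sample record are hypothetical.

```python
import hashlib
import hmac


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed SHA-256 digest (HMAC)."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def scrub_record(record: dict, sensitive_fields: set[str], secret_key: bytes) -> dict:
    """Return a copy of the record with the sensitive fields pseudonymized."""
    return {
        key: pseudonymize(str(val), secret_key) if key in sensitive_fields else val
        for key, val in record.items()
    }


# Hypothetical record; in a real system the key would come from a secrets manager.
key = b"example-key-do-not-use-in-production"
raw = {"email": "user@example.com", "plan": "premium", "clicks": 42}
safe = scrub_record(raw, {"email"}, key)
```

The same email always maps to the same digest, so records can still be joined for training, while the original address never reaches the training dataset.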

Data preparation and cleaning

Collected raw data is rarely used directly for AI training, since it often contains inconsistencies, missing values, or noise that can hurt the model’s overall performance.

- Cleaning and preparing data for AI: Raw data is standardized by fixing errors, removing inconsistencies, and addressing bias to ensure accurate and reliable model training.

- Labeling and annotation: Experts tag and classify data to create high-quality ground truth, which directly impacts model accuracy. This is often a time-consuming process that requires human input.

- Data splitting and validation: Data is divided into training, validation, and test sets so that overfitting can be detected, fairness can be checked, and performance on unseen inputs can be measured reliably.

- Data transformation: For machines to actually learn from data, it needs to be converted into formats the model can understand. Techniques like normalization and standardization put data on a comparable scale to make sure the model doesn’t over-prioritize one variable over another.

- Data augmentation: AI models can learn better from a diverse data set. Data augmentation can help companies achieve this by creating variations of existing data. For example, in image-based AI, a single photo can be flipped, rotated, or adjusted to simulate different scenarios.

- Creating data pipelines: Set up scalable, repeatable pipelines to automate data collection, cleaning, and validation, ensuring consistent quality and faster model development.
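
Two of the steps above, splitting and standardization, can be sketched in a few lines. The helper names and the sample `ages` column are made up for illustration. The one design choice worth noting is that the mean and standard deviation are learned from the training portion only and then reused on the test portion, so that no information from the test set leaks into training.

```python
import random
import statistics


def split_rows(rows, test_ratio=0.2, seed=42):
    """Shuffle a copy of the rows and hold out a test portion."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    n_test = round(len(shuffled) * test_ratio)
    cut = len(shuffled) - n_test
    return shuffled[:cut], shuffled[cut:]


def fit_standardizer(train_values):
    """Learn mean and standard deviation from the training data only."""
    mean = statistics.fmean(train_values)
    std = statistics.pstdev(train_values) or 1.0  # guard against constant columns
    return mean, std


def standardize(values, mean, std):
    """Rescale values into z-scores using the given mean and standard deviation."""
    return [(v - mean) / std for v in values]


ages = [22, 35, 41, 29, 58, 63, 37, 45, 31, 50]  # made-up sample feature
train, test = split_rows(ages)
mean, std = fit_standardizer(train)
train_scaled = standardize(train, mean, std)
test_scaled = standardize(test, mean, std)  # reuse the training statistics
```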

Model design

The fourth step is selecting a suitable AI model. This comes down to your computational resources, use case, and training data.

While there are a huge number of algorithms and architectures, the biggest and fanciest ones aren’t always the right answer; you need to carefully consider the problem type and real-world application. Here are a few examples:

- Let’s say you want your AI model to detect fraud. This is a binary classification problem (fraud or not fraud), so a simple algorithm such as logistic regression can be a reasonable starting point.

- Suppose you want an AI that can do retail basket analysis (“Which products are commonly purchased with diapers?”). You’ll need association rule mining, using an algorithm such as Apriori, to find which items tend to appear together.

- If you want to automate aspects of code writing and debugging, large language models like GPT-5 or Claude 4 are the type of model you need.
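
To show how small a conventional approach can be, the sketch below scores a single association rule for the basket-analysis example using support and confidence, the two standard measures in association rule mining. It is only the scoring step, not the full Apriori algorithm, which also generates and prunes candidate itemsets. The baskets are invented.

```python
def support(transactions, items):
    """Share of transactions that contain every item in `items`."""
    wanted = set(items)
    hits = sum(1 for basket in transactions if wanted <= set(basket))
    return hits / len(transactions)


def confidence(transactions, antecedent, consequent):
    """Of the baskets containing the antecedent, the share that also contain the consequent."""
    base = support(transactions, antecedent)
    both = support(transactions, set(antecedent) | set(consequent))
    return both / base if base else 0.0


# Invented shopping baskets for illustration.
baskets = [
    {"diapers", "wipes"},
    {"diapers", "wipes", "milk"},
    {"diapers", "beer"},
    {"milk", "bread"},
]
diaper_support = support(baskets, {"diapers"})
wipes_given_diapers = confidence(baskets, {"diapers"}, {"wipes"})
```

Here three of the four baskets contain diapers, and two of those three also contain wipes, so the rule “diapers, therefore wipes” has a confidence of about 67%.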

In short, outline as clearly as possible the problem you’re trying to solve. Sometimes, conventional machine learning models can perform a task perfectly well.

Training a generative model from scratch is both extremely costly and slow. It is often recommended to start from a pretrained model and fine-tune it. That said, there is no universal pretrained model: ready-made models come in multiple sizes and architectures. You can also combine multiple models if it’s appropriate, and remember to integrate security measures from this very step.

Model training

All the preparation leads to the step where you actually build the AI: model training. In this step, the model is exposed to the prepared data, where it learns to identify patterns and data relationships while it also adjusts parameters to produce the most accurate response.

The AI training process directly impacts the quality of AI’s responses; hence, it is important to address the following problems:

- Underfitting: Underfitting happens when a model is too simple to capture the underlying patterns in the data. This usually occurs when the model lacks complexity or isn’t trained long enough. As a result, it performs poorly across the board, showing high error on both training data and new, unseen data because it hasn’t learned enough from the data.

- Overfitting: Overfitting is the opposite problem. The model becomes too complex and starts memorizing noise in the training data instead of learning the actual patterns. This usually happens when the model is too powerful for the dataset or trained for too long. As a result, it performs extremely well on training data but fails on new, unseen data, making it unreliable in real-world use.

- Checkpoints and versioning: The training of AI models is rarely a linear process. It’s best to save checkpoints at various stages of training. Without version control, one bad update could force the entire team to start over.
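
The three points above often come together in a technique called early stopping: track the loss on held-out validation data after each epoch, keep a checkpoint whenever it improves, and stop once it has failed to improve for a few epochs in a row. The sketch below is a simplified stand-in that takes a ready-made list of validation losses instead of running a real training loop. The function name and the loss values are hypothetical.

```python
def pick_best_checkpoint(val_losses, patience=2):
    """Return (epoch, loss) of the best checkpoint, stopping early when progress stalls."""
    best_loss = float("inf")
    best_epoch = None
    epochs_without_gain = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            # A real training loop would save the model weights to disk here.
            best_loss, best_epoch = loss, epoch
            epochs_without_gain = 0
        else:
            epochs_without_gain += 1
            if epochs_without_gain >= patience:
                break  # validation loss is rising: a typical sign of overfitting
    return best_epoch, best_loss


# Hypothetical validation losses per epoch: improving, then getting worse.
losses = [0.90, 0.70, 0.60, 0.65, 0.70, 0.50]
best = pick_best_checkpoint(losses)
```

With a patience of 2, training stops after epoch 4 and the checkpoint from epoch 2 is kept. A larger patience would have reached the later improvement at epoch 5, which is the trade-off this parameter controls.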

Model evaluation

Evaluating and testing the model is a crucial step. It measures how well the model generalizes to new, unseen data. This is often done with a dataset the model has never seen, using metrics chosen to fit the specific problem and business goals. Here are a few examples.

Classification metrics:

- Accuracy: Overall correctness, but can be misleading with imbalanced data.

- Precision: How many predicted positives are actually correct (important when false positives are costly).

- F1 Score: Balances precision and recall (how many of the actual positives the model finds) into a single metric.

- Log Loss: Measures how confident and accurate predictions are.
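
As a quick sketch of how these classification metrics are calculated, the functions below work from plain lists of labels, where 1 is the positive class and 0 the negative class. The function names and the sample labels are made up for this example.

```python
import math


def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall, and F1 for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


def log_loss(y_true, y_prob, eps=1e-15):
    """Average negative log-likelihood of the predicted probabilities."""
    total = 0.0
    for t, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1 - eps)  # clip so that log(0) never occurs
        total -= t * math.log(p) + (1 - t) * math.log(1 - p)
    return total / len(y_true)


# Imbalanced example: only 1 positive in 10, and the model predicts "negative" every time.
labels = [1, 0, 0, 0, 0, 0, 0, 0, 0, 0]
always_negative = [0] * 10
report = classification_metrics(labels, always_negative)
```

This is the misleading case mentioned under accuracy: the model scores 90% accuracy while its recall is zero, because it never finds the one positive case.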

Some regression metrics include:

- MAE (Mean Absolute Error): Average prediction error, easy to understand.

- MSE / RMSE (Mean Squared Error / Root Mean Squared Error): Penalize larger errors more heavily (RMSE is easier to interpret because it is in the same units as the target).
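
The regression metrics are equally short to compute. In this sketch the function names and the sample numbers are invented for illustration.

```python
import math


def mae(y_true, y_pred):
    """Mean Absolute Error: the average size of the errors."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def mse(y_true, y_pred):
    """Mean Squared Error: squaring makes large errors count more."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    """Root Mean Squared Error: MSE brought back to the target's units."""
    return math.sqrt(mse(y_true, y_pred))


actual = [3.0, 5.0, 7.0]
predicted = [2.0, 5.0, 10.0]  # one small miss, one exact hit, one large miss
errors = {"mae": mae(actual, predicted), "rmse": rmse(actual, predicted)}
```

The single large miss pulls RMSE (about 1.83) above MAE (about 1.33), which is what “penalizing larger errors more heavily” looks like in practice.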

In addition to these metrics, the model also goes through a number of other rigorous tests, like performance testing, A/B testing, end-to-end tests, integration tests, and more.

Model deployment

Once the evaluation is satisfactory, it is time to move the AI model to the production environment, where it will solve problems in the real world. An important decision here is where the model will run.

- On-device deployment: Models run directly on smartphones or laptops.

- On-premise deployment: Models run on your own infrastructure.

- Cloud deployment: Models run on third-party cloud platforms.

- Edge deployment: Models are deployed on distributed devices (e.g., IoT or sensors).

Choosing the deployment environment isn’t the end of this step. Teams also need to:

- Ensure the model performs well under high load.

- Maintain version control for deployed models and enable safe rollbacks to quickly recover from performance issues.

- Create thorough documentation to facilitate knowledge transfer.

- Continuously monitor the model’s performance and flag any anomalies. This can also be done by setting automatic alarms.
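
An automatic alarm can be as simple as tracking the error rate over the most recent requests. The sketch below is one possible shape for such a check. The class name, the window size, and the threshold are assumptions for illustration, and a real deployment would more likely rely on the alerting features of its monitoring platform.

```python
from collections import deque


class ErrorRateAlarm:
    """Raise a flag when the error rate over the last `window` requests exceeds a threshold."""

    def __init__(self, window=100, threshold=0.1):
        self.outcomes = deque(maxlen=window)  # old outcomes drop off automatically
        self.threshold = threshold

    def record(self, is_error: bool) -> bool:
        """Store one outcome and return True if the alarm should fire."""
        self.outcomes.append(is_error)
        if len(self.outcomes) < self.outcomes.maxlen:
            return False  # wait until the window is full to avoid noisy early alerts
        return sum(self.outcomes) / len(self.outcomes) > self.threshold


# Hypothetical stream of request outcomes (True means the prediction was wrong or failed).
alarm = ErrorRateAlarm(window=4, threshold=0.25)
fired = [alarm.record(outcome) for outcome in [False, False, False, True, True]]
```

The alarm stays silent while one in four recent requests fails and fires once the rate reaches two in four, at which point the team could investigate or roll back to the previous model version.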

Model monitoring, maintenance, and iteration

Deploying doesn’t mean you never need to train the AI model again. While monitoring the model’s performance, companies also need to:

- Establish systems to gather users’ feedback.

- Train the models with new data to retain their accuracy and relevance.

- Monitor the model for any performance degradation or model drift, and regularly retrain and revalidate it.

- Continuously monitor AI systems to make sure they operate responsibly, and address any ethical issues as they arise.

- Scale AI use cases and integrations over time, while proactively managing new risks and ethical considerations.

Above are the main steps of the AI development lifecycle. Following this framework mitigates risk while creating a high-performing and scalable AI model.

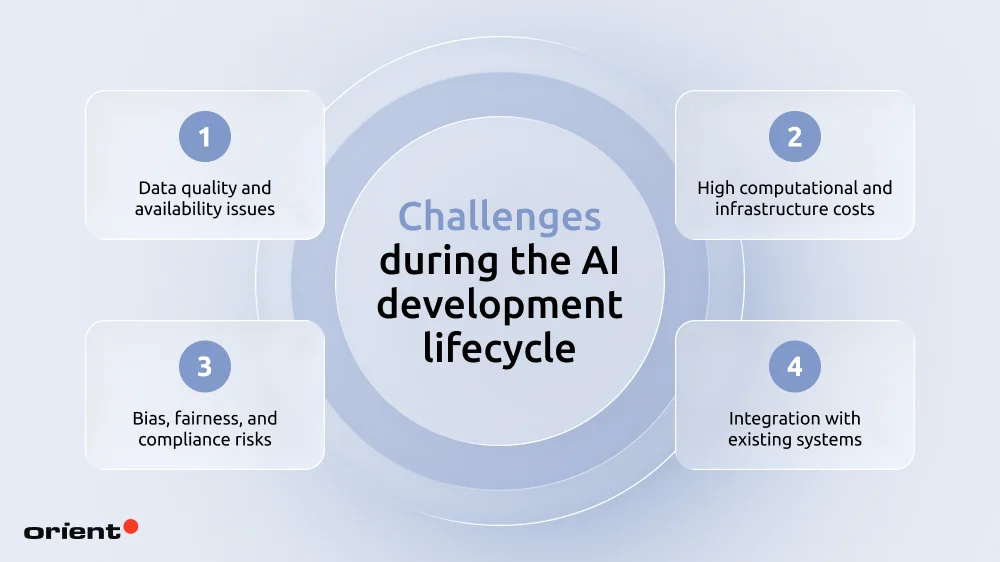

Challenges during the AI development lifecycle (and how to solve them)

This article won’t dig too deep into all the challenges during the development process, but here are some of the most common problems teams run into and possible solutions for them.

- Data quality and availability issues: Siloed, inconsistent, or low-quality data leads to unreliable outputs, making even advanced models ineffective without proper data governance and cleaning.

Solution: Standardize and clean data through governance frameworks, automated pipelines, and regular audits.

- High computational and infrastructure costs: AI, especially LLMs, demands heavy GPU and infrastructure investment.

Solution: Optimize and go cloud-first. Use cloud infrastructure, model optimization (e.g., pruning, quantization), and hybrid setups to reduce compute costs with little loss in performance.

- Bias, fairness, and compliance risks: Models can inherit bias from historical data and face increasing regulatory pressure.

Solution: Implement responsible AI practices. Use diverse datasets, run bias audits, and adopt governance frameworks.

- Integration with existing systems: Legacy infrastructure often can’t support modern AI.

Solution: Use API-first and phased modernization. Connect AI with legacy systems via APIs, middleware, or hybrid architectures instead of full system overhauls.
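
To make the quantization idea from the compute-cost solution above less abstract, here is a toy sketch of symmetric 8-bit quantization: each weight is stored as a small integer plus one shared scale factor, which cuts memory roughly fourfold compared with 32-bit floats at the price of a small rounding error. The function names and the weights are made up, and production frameworks use more refined schemes.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    scale = max(abs(w) for w in weights) / 127 or 1.0  # 1.0 if all weights are zero
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.5, -1.0, 0.25, 0.0]  # made-up model weights
stored, scale = quantize_int8(weights)
restored = dequantize(stored, scale)
worst_error = max(abs(w - r) for w, r in zip(weights, restored))
```

The worst-case error per weight is about half the scale factor, which is why quantization tends to work well when weights are spread fairly evenly and less well when a few extreme values stretch the range.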

Master the AI development lifecycle with Orient Software

Understanding the AI development lifecycle gives you a clear roadmap to follow, whether you are starting out on your project or scaling an existing AI solution. After all, organizations that master the AI development lifecycle will be better positioned to adapt and stay ahead in an increasingly competitive, AI-driven market.

Another way to speed up your development lifecycle even further: have Orient Software as your partner. With two decades of experience and dozens of successful projects, we are confident that we can turn your vision into reality. Contact us today!